Design Systems are now Inference Systems

Issue 295: From prescriptive patterns to adaptive parameters

Whether building them or leading the teams that owned them, I’ve spent most of my career inside a design system. As a designer, I was the kind of systems designer who broke things in favor of evolution. My intention was not malicious; it was to future-proof the system. Design Systems in the Blitzscaling era of the 2010s were built for a different purpose than the one the AI Scale era of the 2020s demands.

We’re moving from Design Systems to Inference Systems. There are three shifts I’ll walk through. Patterns become parameters. Documentation becomes context. Governance becomes feedback loops. Each one rewires a different part of how the system works, and together they change the system’s purpose.

Patterns to parameters

Traditional design systems define patterns: a modal looks like this, a primary button uses these tokens, and a form field follows this spacing rhythm. The system documents the constructs for building components with fixed values. Design teams were built to assemble those components into screens in a prescriptive process.

That stability is what’s changing along with the definition of consistency. A user in an agentic experience might start in a chat thread, have the agent surface a comparison table mid-conversation, drop into a focused task view, and end with a voice summary on their phone. None of those surfaces existed as a designed artifact when the interaction started. The agent assembled them on the fly based on what the moment required.

Most of the patterns defined in the Blitzscaling era don’t survive that context. They assume a designer chose the layout, that the layout is stable for the duration of the task, and that the user’s input modality is roughly fixed. Agentic experiences break all three assumptions. Interactions are now multi-modal, and new affordances such as streaming responses, interruptible flows, and ambient confirmations don’t have a 2018 equivalent to copy from.

The shift is from defining values to defining parameters. A pattern of values says, “the modal is 480px wide with 24px of padding based on these variables.” A parameter says, “the modal expresses focused attention in a transient surface; its width compresses when the surrounding context is dense and expands when the user is committing to a multi-step task.” A parameter describes a behavior the system can apply in conditions the original designer never anticipated.
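To make the contrast concrete, here is a rough sketch of the modal example as a fixed value versus a parameter. The names (`ModalContext`, `modalWidth`), the 0.75 and 1.5 multipliers, and the 32px viewport margin are all assumptions for illustration; only the 480px baseline comes from the example above.

```typescript
// Hypothetical sketch: names and multipliers are illustrative assumptions.
type ContextDensity = "sparse" | "dense";

interface ModalContext {
  density: ContextDensity; // how crowded the surrounding surface is
  multiStepTask: boolean;  // is the user committing to a multi-step task?
  viewportWidth: number;   // available width in px
}

// A pattern: one fixed value, chosen ahead of time.
const MODAL_WIDTH_PATTERN = 480;

// A parameter: a rule that yields a value for whatever context shows up.
function modalWidth(ctx: ModalContext): number {
  let width = MODAL_WIDTH_PATTERN;              // start from the old baseline
  if (ctx.density === "dense") width *= 0.75;   // compress in dense contexts
  if (ctx.multiStepTask) width *= 1.5;          // expand for multi-step tasks
  // Never exceed the viewport, keeping an assumed 32px of total margin.
  return Math.round(Math.min(width, ctx.viewportWidth - 32));
}

const denseWidth = modalWidth({ density: "dense", multiStepTask: false, viewportWidth: 1280 });
const taskWidth = modalWidth({ density: "sparse", multiStepTask: true, viewportWidth: 1280 });
```

The point is not the specific numbers. The rule carries the intent, so it still produces a sensible answer on a surface nobody designed in advance.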

The Inference System cares less about what the modal looks like than about having the insight to know when to invent something the library doesn’t contain at all.

Documentation to context

Design system documentation today is written for humans. The usage guidelines include values, UI Kits, code samples, and a list of do’s and don’ts. An LLM doesn’t need to scan a Storybook page; it parses the structure underneath, and it benefits from the rule that produced the placement.

Years of design system work have gone into making the guidelines digestible for humans, often at a huge maintenance cost. This is where design tokens become something more than a convenience. A traditional token stores a value: --color-primary: #0066FF. An inference-ready token stores intent: --color-interactive-primary, with semantic meaning, usage rules, contrast requirements, and relationships to adjacent tokens. The first tells you what color to use. The second tells you why, and why is what a model can reason about. A model that knows interactive-primary is for “the most prominent action in a given context” can make a defensible choice in a layout it has never seen before. A model that only knows #0066FF can only match.
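As a sketch of what that difference might look like in data, here are the two tokens side by side. The field names, the usage rules, the 4.5 contrast figure, and the `--color-interactive-secondary` neighbor are assumptions for illustration, not a published token format.

```typescript
// Hypothetical shapes: not a published token specification.
interface ValueToken {
  name: string;
  value: string;
}

interface IntentToken extends ValueToken {
  intent: string;      // what the token is for
  usage: string[];     // rules for applying it
  minContrast: number; // required contrast ratio against its surface
  related: string[];   // adjacent tokens it should be reasoned about with
}

// The traditional token: a value and nothing else.
const valueToken: ValueToken = { name: "--color-primary", value: "#0066FF" };

// The inference-ready token: the same value, plus the reasons behind it.
const intentToken: IntentToken = {
  name: "--color-interactive-primary",
  value: "#0066FF",
  intent: "the most prominent action in a given context",
  usage: ["one per view", "never for decorative fills"], // assumed rules
  minContrast: 4.5,                                      // assumed requirement
  related: ["--color-interactive-secondary"],            // assumed neighbor
};

// Flatten a token into a line of context a model could be handed.
function toModelContext(t: IntentToken): string {
  return (
    `${t.name} (${t.value}): use for ${t.intent}. ` +
    `Rules: ${t.usage.join("; ")}. ` +
    `Minimum contrast ${t.minContrast}:1. ` +
    `Related: ${t.related.join(", ")}.`
  );
}
```

Both tokens resolve to the same hex value. Only the second gives a model something to reason from when the layout is unfamiliar.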

MCP servers are an early signal of this shift. When an agent inspects a Figma frame through MCP, the server hands it structured context: the components in use, their variant properties, the variables they consume, the styles applied. The agent receives the design as data, in the same way a developer receives an API response. Google Stitch’s design.md file gestures at the same idea from a different angle: a single file describing a product’s design intent in a format a model can read end-to-end.

The pattern across these examples is the same. The artifact a designer produces is becoming an interface that talks to two audiences at once. Humans still need the screenshots and the prose. Models need the structure underneath. User Experience is now human and agent. The systems that thrive will treat both as first-class outputs of the same source, not one as a translation of the other.

Governance to feedback loops

Design system governance has traditionally been a review process. Did you use the right component? Is this on-brand? Does this follow the spacing guidelines? Someone on the design systems team checks the work, flags deviations, and asks the team to bring their PR back into compliance. It’s quality control through human oversight, and it works at human scale.

This model breaks at agentic scale. When an agent generates a layout, then revises it twelve seconds later in response to a user’s follow-up, then generates a different layout for the next user with slightly different context, the volume of “design decisions” being made per day jumps by orders of magnitude. There is no version of the design systems team that reviews each one. Even if there were, the review would arrive too late to matter.

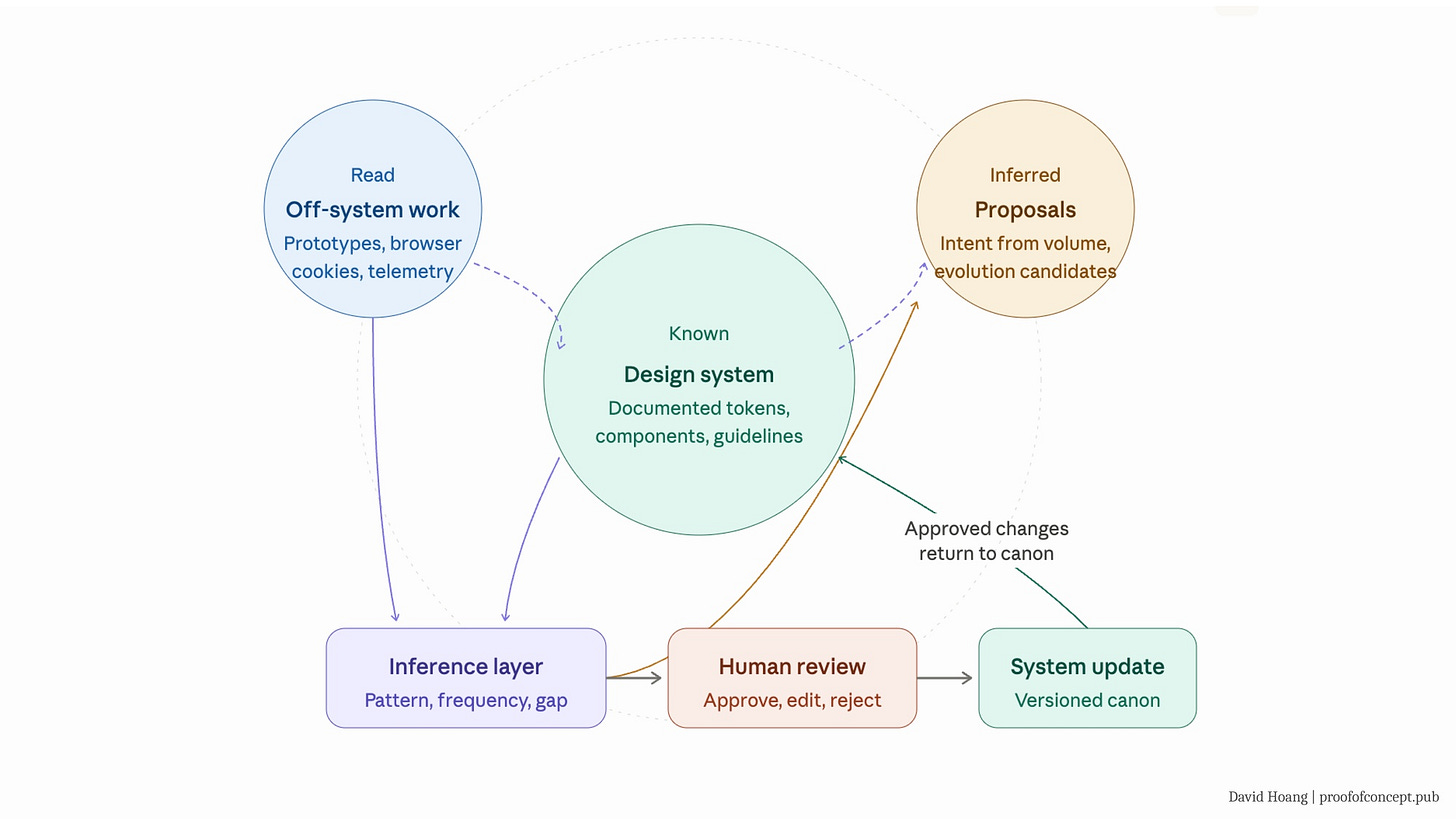

Inference systems need a different model. The system itself has to evaluate conformance as the work is being produced, and more importantly, it has to learn from what gets built. This is where “inference” becomes literal. The system doesn’t just hand the agent a rulebook. It watches what the agent (and the humans alongside it) actually ship, and updates its own understanding of the product accordingly.

The most important reframe is what to do with deviation. In the old model, a deviation is viewed as a mistake to fix. The team failed to use the prescribed component and the fix is to bring them back into line. In an Inference System, a single deviation is still probably a mistake. But fourteen teams independently building the same off-system component is no longer a governance failure. It’s a signal that the system is behind its users.

The question is which one is the mistake: the governed decision or the deviation?

Sometimes the answer is the deviation, and the system holds. Sometimes the answer is the governed decision, and the deviation is showing you where the system needs to evolve. The job of the systems designer shifts from enforcing the first answer to investigating the second.

This is what I mean by governance becoming a feedback loop. Not that quality control disappears, but that the locus of quality moves from a checkpoint at the end of the process to a feedback loop running underneath it. Deviations stop being “don’ts” and start being data. The system stops being a wall and starts being a sensor.
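A minimal sketch of treating deviations as data, assuming deviations are logged as simple team and component pairs. The `Deviation` shape, the verdict labels, and the default threshold of three teams are hypothetical; a real system would tune the threshold and look at far richer signals.

```typescript
// Hypothetical sketch: shapes, labels, and threshold are assumptions.
interface Deviation {
  team: string;
  component: string; // name of the off-system component that was built
}

type Verdict = "likely-mistake" | "system-gap";

// Count distinct teams per off-system component. One team deviating is
// probably a mistake; many teams converging on the same thing is a signal.
function classifyDeviations(
  deviations: Deviation[],
  teamThreshold: number = 3
): Record<string, Verdict> {
  const teamsByComponent: Record<string, string[]> = {};
  for (const d of deviations) {
    const teams = teamsByComponent[d.component] || (teamsByComponent[d.component] = []);
    if (teams.indexOf(d.team) === -1) teams.push(d.team); // distinct teams only
  }

  const verdicts: Record<string, Verdict> = {};
  for (const component of Object.keys(teamsByComponent)) {
    verdicts[component] =
      teamsByComponent[component].length >= teamThreshold ? "system-gap" : "likely-mistake";
  }
  return verdicts;
}

const report = classifyDeviations([
  { team: "checkout", component: "inline-comparison-table" },
  { team: "search", component: "inline-comparison-table" },
  { team: "support", component: "inline-comparison-table" },
  { team: "growth", component: "custom-tooltip" },
]);
```

Here the comparison table built by three separate teams is flagged as a gap in the system, while the one-off tooltip stays a probable mistake. Either verdict is a prompt to investigate, not an automatic ruling.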

How execution is reframed

The three shifts above all converge on a single change in what a design system is. It stops being reference material and becomes contextual material. The artifact a designer produces is no longer a thing the agent looks up; it’s the substrate the agent thinks in.

This is not a concept I claim to have invented; I’m capturing something that’s already happening across the industry. Airbnb has been classifying its 150+ components with ML so that AI tools can assemble prototypes from user behavior rather than from a designer’s blank canvas. The design system isn’t a passive reference the team consults; it’s the infrastructure the AI builds on top of. Brad Frost, one of the people who shaped what design systems became in the first place, has been writing about an agentic design system framework that treats the system as something an agent operates within rather than something a human reads. Google Stitch shipped a design.md convention: a model-readable file describing a product’s design intent end-to-end.

None of these teams are doing the same thing, and that’s the point. There isn’t a settled pattern yet. What they share is the underlying move: treating the design system as the model’s understanding of the product, not as a catalog the model consults.

That’s the reframe. Adoption, the metric that defined design system success for the last decade, measured how many teams used the components you shipped. Adaption measures how well the system evolves in response to what those teams (and their agents) actually build.

The system’s new purpose

We’re going from adoption to adaption.

Adoption has been the standard metric of design system success for as long as the discipline has existed. How many teams use the buttons you ship? How many screens are built from canonical components? How much of the product surface is on-system? Those numbers matter, and they’re not going away. Scaling consistency through re-usable design and code is still the foundation, and any team that abandons it in pursuit of something shinier will regret it within a quarter.

Adoption is no longer enough of a metric on its own, and Adaption sits on top of it. Adoption tells us how widely the system is used; Adaption tells us how well the system is evolving.
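There is no settled formula for Adaption yet, so treat the following as one possible way to put the two metrics next to each other. The `SystemSnapshot` fields and the definition of Adaption as "gaps absorbed over gaps identified" are my assumptions for the sketch, not an established measure.

```typescript
// Hypothetical sketch: one possible way to quantify the two metrics.
interface SystemSnapshot {
  screensTotal: number;    // screens shipped in the period
  screensOnSystem: number; // of those, built from canonical components
  gapsIdentified: number;  // recurring off-system work flagged as a signal
  gapsAbsorbed: number;    // of those, since folded back into the system
}

// Adoption: how widely the system is used.
function adoptionRate(s: SystemSnapshot): number {
  return s.screensTotal === 0 ? 0 : s.screensOnSystem / s.screensTotal;
}

// Adaption (assumed definition): how much of what teams signaled
// the system has actually evolved to cover.
function adaptionRate(s: SystemSnapshot): number {
  return s.gapsIdentified === 0 ? 1 : s.gapsAbsorbed / s.gapsIdentified;
}

const quarter: SystemSnapshot = {
  screensTotal: 200,
  screensOnSystem: 150,
  gapsIdentified: 8,
  gapsAbsorbed: 2,
};
```

A snapshot like this one would read as a widely used system that is slow to learn: three quarters of screens are on-system, but only a quarter of the gaps teams surfaced have been absorbed.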

In an Inference System, prototypes are data, whether a static HTML exploration, a branch of code experiments, or a design file built outside the canonical components. These are not sins against the system but signals of where it needs to grow.

In practice, prototypes at every level of fidelity become first-class context. It’s a mistake to think every prototype needs to go to production; that defeats the purpose of rapid prototyping, where the insight is the artifact. From the thousands of insights captured, an adaptive system can distill the collective intention of what to ship to production.

Design systems were never only about tokens and components. The goal was to codify decisions and scale craft. What has changed is the decoder. For the past decade it was a designer reading documentation. Now the decoder is a model parsing structure.

The teams who get this early will spend the next few years quietly rebuilding their design systems into something that looks less like a component catalog and more like the model's understanding of their product. Call it an Inference System if it's useful. The label matters less than the shift.